Our overall goal as a company is to make computational materials discovery available to more people. We have built our simulation platform PhinOS to achieve this in two ways:

PHIN has made great strides in addressing the first item, and with the release of PhinOS’s Virtual Scientists, we are now also solving the second.

For decades, materials simulation has largely been the domain of computational materials scientists (CMSs). The main barrier is the deep expertise required to produce meaningful computational results, which typically spans several areas: computational modeling, data management, ML infrastructure management, and simulation engineering/scripting.

PHIN’s Virtual Scientists are AI agents that combine detailed knowledge of the computational materials science problem space with detailed knowledge of the PhinOS orchestration platform. The merger of the two results in a conversational interface that can not only provide known answers to materials questions, but also suggest and create experiments to produce new knowledge when answers are unknown.

Our ultimate aim is for a materials engineer or computational manager to interface with our PHIN Virtual Scientist in exactly the same way they’d interface with a human computational scientist today. The difference is that instead of budgeting tasks and waiting for them to be executed, our customers get answers to their questions by orchestrating virtual experiments within PhinOS.

For computational teams, this frees up bandwidth for broader experimental design or exploration tasks. Upstream teams, meanwhile, can run their own CMS experiments with little to no external involvement and get near-immediate results at extremely low cost.

Our approach to making computational materials discovery accessible revolves around the powerful, integrated capabilities of PHIN's Virtual Scientists within the PhinOS platform. This is achieved through two interconnected facets:

Tying these two aspects together in a seamless user experience requires balancing several User Experience (UX) needs, and we’ve had to make certain assumptions and tradeoffs to enable broad adoption, regardless of a user's comfort level with CMS. Our goal is to allow users at all levels to interface with Virtual Scientists the same way they would with a human CMS. To achieve this, the agentic tool responses need to mirror what a real Computational Materials Scientist would share when demonstrating their conclusions.

Today, there are two extremes for agentic conversational interfaces. On one extreme, we have purely textual agents like ChatGPT, where the entire interface is the agent. On the other extreme, we have human-in-the-loop primary interfaces, where the agent acts as an assistant whose work is double-checked by the user in a primary interface, as in agentic Integrated Development Environments (IDEs) like Cursor.

Neither approach is appropriate for our goals. We need to strike a balance: users should not have to understand complex CMS workflows to use our product, yet the interface must remain informative enough to give them confidence in the results and in how they were derived. As such, we created a third option that combines an agentic-first interface with rich interactivity built upon our platform’s core product concepts. One example of this interactivity is a task sandbox view, which shows the hierarchy of orchestrated tasks and gives users an intuitive way to understand the methodology behind the creation of new materials knowledge.

This sandbox can be manipulated by interacting directly with the agent, allowing users to tweak the methodology behind creating this new knowledge without needing to know anything about how it is produced.
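To make the idea of a task hierarchy concrete, here is a minimal sketch of how such a sandbox could be modeled. It is not the PhinOS API: the `Task` class, the `apply_tweak` helper, and the task names and parameters are all hypothetical and chosen purely for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Task:
    """One node in a hypothetical orchestrated-task hierarchy (not a PhinOS type)."""
    name: str
    params: dict = field(default_factory=dict)
    children: List["Task"] = field(default_factory=list)

    def find(self, name: str) -> Optional["Task"]:
        """Depth-first search for a task by name; returns None if absent."""
        if self.name == name:
            return self
        for child in self.children:
            hit = child.find(name)
            if hit is not None:
                return hit
        return None

    def render(self, depth: int = 0) -> List[str]:
        """Return an indented outline, one line per task."""
        lines = ["  " * depth + self.name]
        for child in self.children:
            lines.extend(child.render(depth + 1))
        return lines


def apply_tweak(root: Task, task_name: str, **changes) -> Task:
    """Update one task's parameters, as an agent might after a user request."""
    task = root.find(task_name)
    if task is None:
        raise KeyError(task_name)
    task.params.update(changes)
    return task


# Illustrative hierarchy; the task names and parameters are made up.
experiment = Task("screen candidate alloys", children=[
    Task("generate structures", {"count": 50}),
    Task("relax structures", {"max_steps": 200}),
    Task("rank by formation energy"),
])

# A conversational request such as "relax for longer" could map to a tweak like this.
apply_tweak(experiment, "relax structures", max_steps=500)
print("\n".join(experiment.render()))
```

In this sketch the user never edits the tree directly. A request made in conversation is translated into a parameter change on one node, and the rendered outline is what a sandbox view would display.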

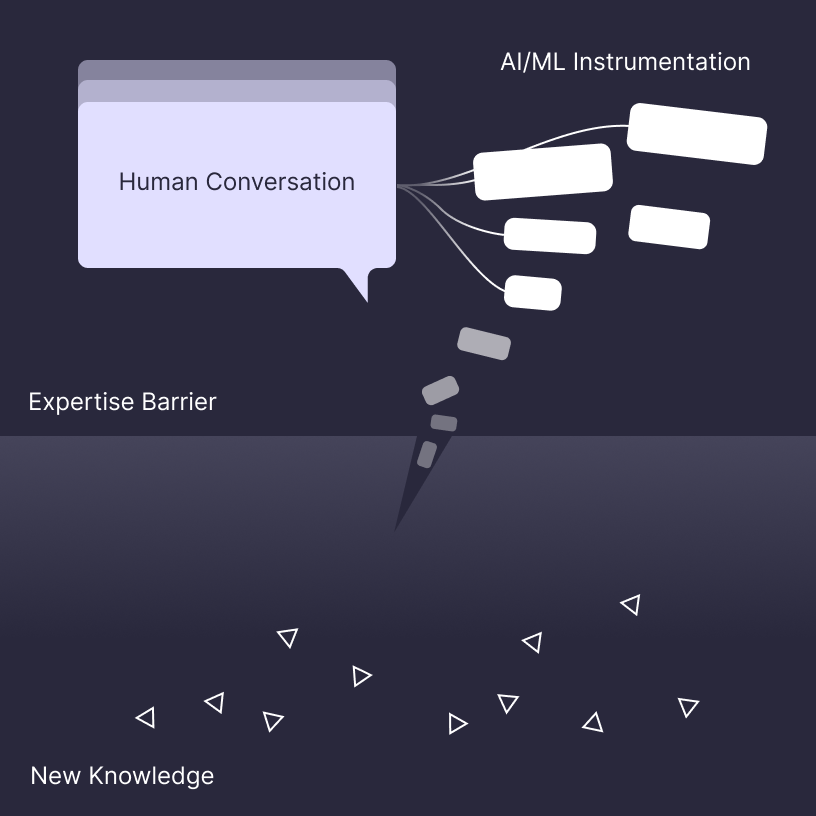

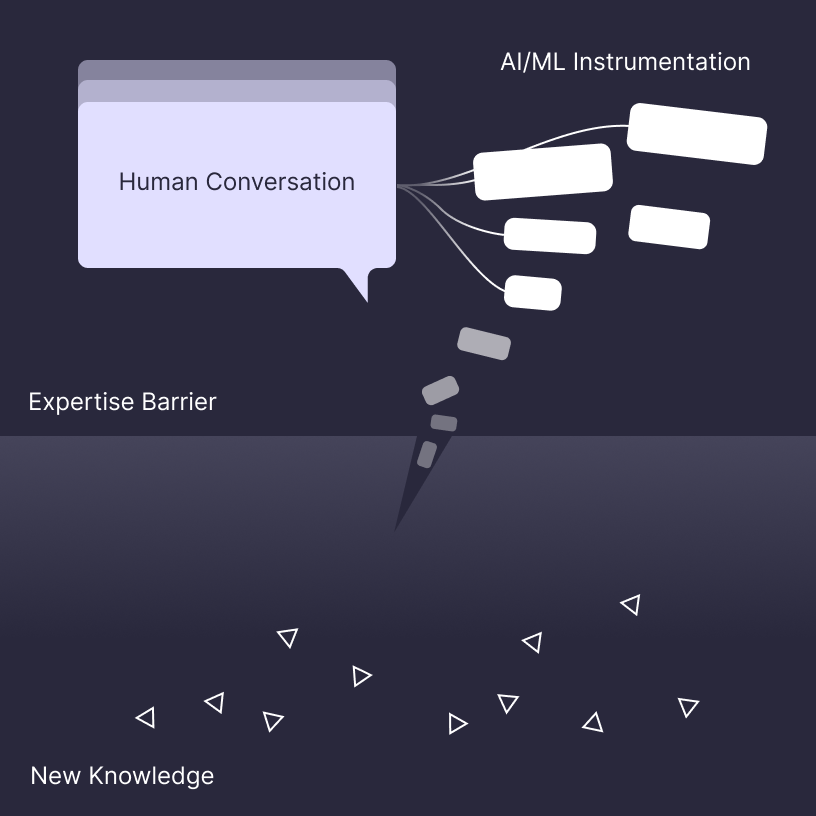

We call this new paradigm “Instrumented conversational UI”, where the primary mode of interaction is still agentic, but it acts as a conversational abstraction on top of the complex instrumentation workflows beneath the surface.

Our instrumented conversational UI facilitates two UX loops: context building through problem definition, and methodology building through a conversational walkthrough of the solution.

The result is an agentic interface that meets our initial goals. While still a new feature, tests with computational materials scientists showed that the functionality helped support onboarding, and was useful in bootstrapping new experiments.

One challenge we currently face is that the scope of the results loop is very large, as there are many different analysis methods the agent can use. Through continued refinement, our agents will learn to infer appropriate methods from the context provided and from historically used analysis methods, creating a self-improvement loop.

As agentic systems evolve, the jury is still out on whether more human-in-the-loop involvement is necessary, or less, but we are positioning our platform to support both paradigms.

We would love to work with you to find out what works.